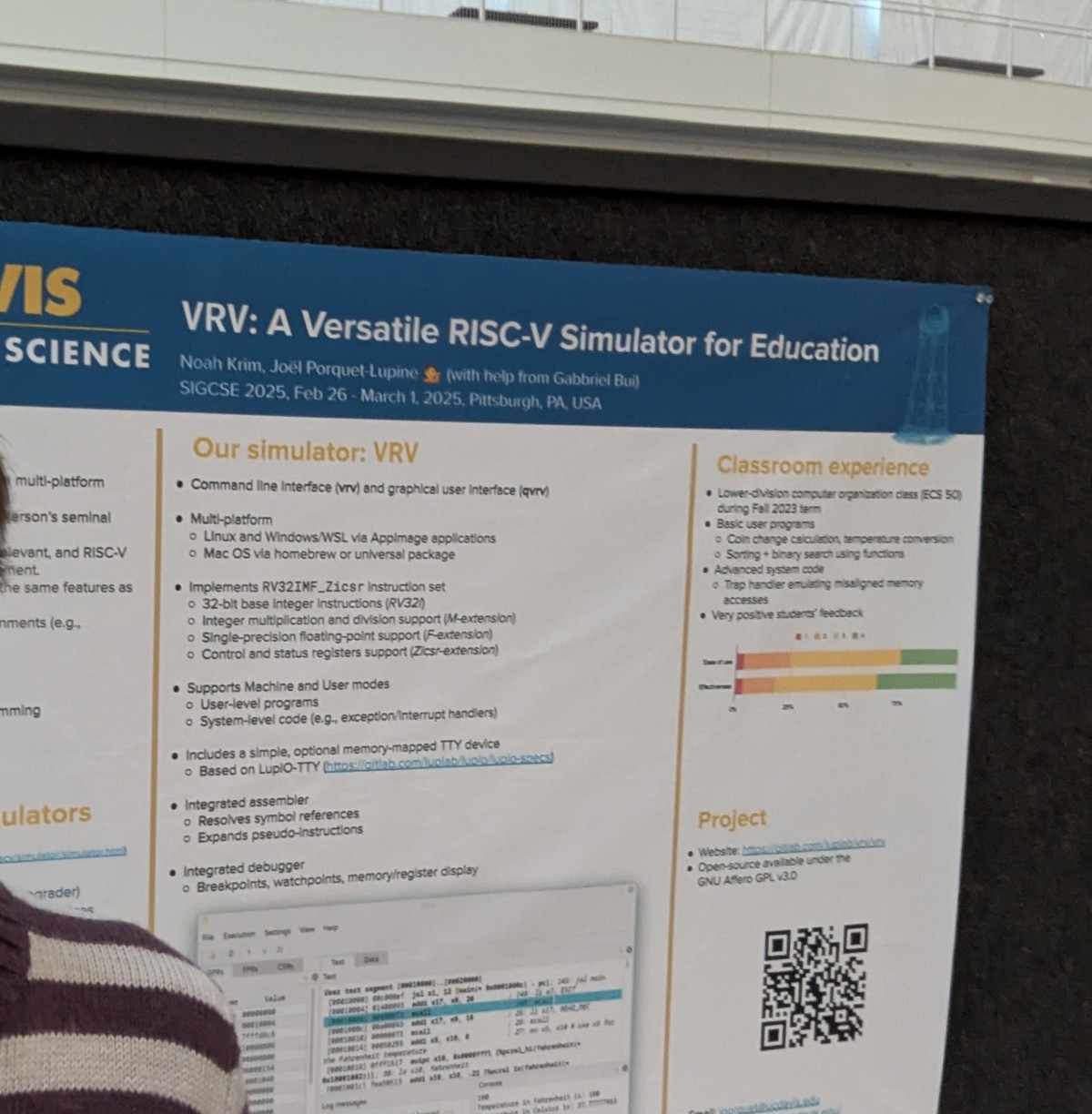

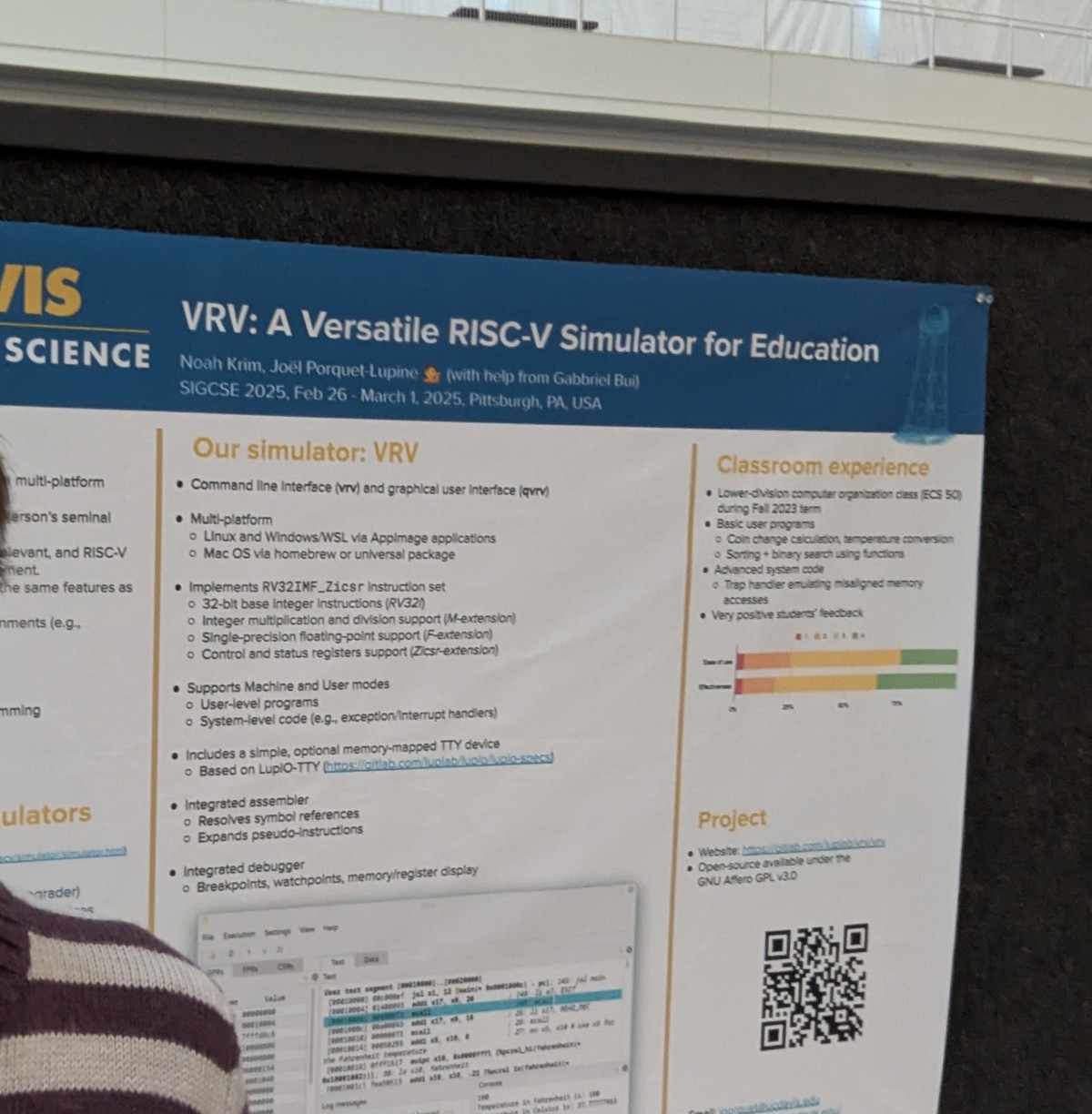

I presented our VRV (Virtual RISC-V) educational simulator at the ACM SIGCSE Technical Symposium. Big thanks to Noah Krim and Gabbriel Bui to make it happen!

Here is our status at the end of the fall quarter 2025.

Here is our status at the end of the summer 2025.

Here is our status at the end of the spring quarter 2025.

I presented our VRV (Virtual RISC-V) educational simulator at the ACM SIGCSE Technical Symposium. Big thanks to Noah Krim and Gabbriel Bui to make it happen!

Here is our status at the end of the winter quarter 2025.

Here is our status at the end of the fall quarter 2024.

Here is our status at the end of the summer 2024.

libvrv, our independent RISC-V

emulation library, which is the core of our VRV (Virtual

RISC-V) framework. Noah has now graduated so I

took over the development.Jhaydine is a 2nd-year computer science undergrad and is part of the UC LEADS program. She implemented some new features in LupBook.

Kushagra and Shengmin are both 3rd-year electrical engineering undergrads and participated in the CITRIS Workforce Innovation program. They implemented most of the LupIO devices in Verilog.

]]>Here is our status at the end of the spring quarter 2024.

libvrv), which Noah has started

implementing. This library provides a public API which is used by various

clients. I rewrote the command-line client (vrv-cli).